Fast Forward

Thesis

I'm working on game AI for social engagement - an engine for conversational NPCs, optimized for believability, authorability, and efficiency.

The dissertation is on modeling interaction using a hierarchy of parallel hidden Markov models. Each HMM in the network models a particular aspect of the interaction (say, one of the sub-threads of the given situation), and all models run concurrently. The resulting system allows for simultaneous causal dependence and temporal independence between the models, leading to a better interaction with softer failure modes.
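To make the idea of concurrently running models concrete, here is a minimal sketch, not the dissertation's implementation. It assumes small discrete HMMs that all receive the same observation stream and each keep their own belief state via the standard forward (filtering) update. The class `HMM`, the helper `step_all`, the thread names, and all probabilities are hypothetical, and the sketch leaves out the hierarchy and any dependence between models.

```python
from dataclasses import dataclass


@dataclass
class HMM:
    """A small discrete HMM tracked with the forward (filtering) update."""
    name: str
    transition: list  # transition[i][j] = P(next state j | current state i)
    emission: list    # emission[j][o] = P(observation o | state j)
    belief: list      # current distribution over hidden states

    def step(self, obs: int) -> float:
        """Advance one time step; return the likelihood of `obs` under this model."""
        n = len(self.belief)
        # Predict: push the belief through the transition matrix.
        predicted = [
            sum(self.belief[i] * self.transition[i][j] for i in range(n))
            for j in range(n)
        ]
        # Correct: weight each state by how well it explains the observation.
        unnorm = [predicted[j] * self.emission[j][obs] for j in range(n)]
        likelihood = sum(unnorm)
        if likelihood > 0:
            self.belief = [u / likelihood for u in unnorm]
        else:
            # Observation impossible under this model: keep the prediction.
            self.belief = predicted
        return likelihood


def step_all(models, obs):
    """Feed the same observation to every model; each keeps its own state."""
    return {m.name: m.step(obs) for m in models}


# Hypothetical observation alphabet: 0 = accusation, 1 = plea, 2 = other.
# Each thread has two states: 0 = idle, 1 = active.
guilt = HMM("guilt", [[0.8, 0.2], [0.3, 0.7]],
            [[0.1, 0.1, 0.8], [0.7, 0.1, 0.2]], [1.0, 0.0])
bargaining = HMM("bargaining", [[0.8, 0.2], [0.3, 0.7]],
                 [[0.1, 0.1, 0.8], [0.1, 0.7, 0.2]], [1.0, 0.0])

likelihoods = step_all([guilt, bargaining], 0)  # the player makes an accusation
```

Because every model advances on every input, an utterance that one thread cannot explain only lowers that thread's likelihood, while the other threads keep tracking the conversation. That is one way to read the "softer failure modes" mentioned above.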

The Breakup Conversation. The first demo is a simulation of a potentially stereotyped yet very emotionally laden situation: a conversation that accomplishes a break-up. The computer has the knowledge of some stereotyped interactions that people get into in such situations - the "why are you doing this to me", the "please I can change", and so on - and the player's role is to successfully maneuver through the maze of accusations and guilt attacks. The title is a stress-test of the technology, designed to show how to apply conversation in circumstances that traditionally pose great technical difficulties.

A playable demo of the Breakup Conversation is available for download, as well as a movie (.wmv, 14 MB).

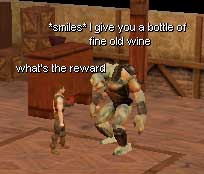

Xenos the Innkeeper. The second demo, on which I'm working right now, is an NPC for the role-playing game Neverwinter Nights. The character is an RPG classic: an innkeeper. The player can talk with him to buy things, and the agent will have some information about quests in which the player can engage. The information about what the player can do will come out in the course of the conversation, just like with actual human-driven characters.

You can watch it in action by downloading the Xenos Demo movie (.wmv, 8 MB).

The Xenos demo uses Shadow Door, an interface module I wrote with Phil Saltzman that lets one connect arbitrary external code to Neverwinter Nights in order to control an NPC's behavior.

Games

In addition to the thesis work, I also have a few other game AI projects on my conscience.

tt14m, the trash-talking 14-year-old moron, uses simple text processing to attempt engagement in the social aspects of playing the game Counter-Strike. tt14m is a cheap and efficient system that attempts to model the two most common, and arguably simplest, characteristics of players' social interactions: displays of emotional involvement in the game, and verbal posturing.

A more comprehensive description of the system can be found in Aaron's and my recent article "Applying Inexpensive AI Techniques to Computer Games", on the publications page.

being-in-the-world is an intelligent agent capable of living autonomously in a MUD world. Its hybrid architecture merges a behavior-based reactive layer with a symbolic problem-solver, and the agent makes simple inferences about the world while trying to cope with novel situations.

This work was presented at the 2001 AAAI Symposium on Artificial Intelligence and Interactive Entertainment.

AlphaSkaarj is an autonomous monster for the first-person shooter game Unreal. In place of the standard finite-state machine for behavior control, it uses methods from behavior-based robotics to run all behaviors in parallel, so that all of them are always active, but only one or a few actually drive the creature at any moment. This programming style, and the close coupling of perception and action, are very much inspired by studies of animal behavior.

Presented at the 1999 AAAI Symposium on Artificial Intelligence and Computer Games.
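The contrast with a finite-state machine can be sketched as follows. This is an illustrative toy, not AlphaSkaarj's code: it assumes every behavior is evaluated on every tick and returns an activation level with a proposed action, and that a simple winner-take-all arbiter lets the strongest one drive the creature. The behaviors (`flee`, `attack`, `wander`), the percept fields, and the activation formulas are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# A behavior maps the current percept to (activation, proposed action).
Percept = Dict[str, float]
Behavior = Callable[[Percept], Tuple[float, str]]


def flee(p: Percept) -> Tuple[float, str]:
    # Grows more urgent as health drops.
    return (1.0 - p["health"], "run_away")


def attack(p: Percept) -> Tuple[float, str]:
    # Active only when an enemy is visible; stronger when it is close.
    return (p["enemy_visible"] * (1.0 - p["enemy_distance"]), "attack")


def wander(p: Percept) -> Tuple[float, str]:
    # Constant low-level default so something always drives the creature.
    return (0.1, "wander")


def tick(behaviors: List[Behavior], percept: Percept) -> str:
    """Evaluate every behavior on this percept and return the winning action."""
    proposals = [b(percept) for b in behaviors]  # all behaviors run every tick
    _, action = max(proposals, key=lambda pr: pr[0])
    return action


behaviors = [flee, attack, wander]
action = tick(behaviors, {"health": 0.9, "enemy_visible": 1.0, "enemy_distance": 0.2})
```

There is no stored "current state" to transition out of: control shifts from one behavior to another simply because the percept changed and a different activation became the largest. A blending arbiter, where several behaviors contribute at once, would replace the `max` call.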

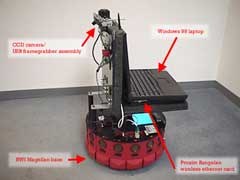

Robotics

In my previous life, I was a roboticist. Most recently I worked on the GRACE team - short for the Graduate Robot Attending Conference in Edmonton, 2002. Demoed at IJCAI 2002, the robot started at the conference hall entrance, found its way to the registration desk guided by voice instructions, registered itself for the conference, navigated autonomously through the expo floor, got to a demo area, and delivered an extemporaneous talk about itself, along with PowerPoint slides. Ian's and my contribution was the last module - a system for behavior-based, situated explanation production, grounded in navigation through the PowerPoint presentation. GRACE won the Ben Wegbreit Award at IJCAI, and we got some good coverage in The New York Times, BusinessWeek, and elsewhere.

Cerebus was our group's 'self-demoing' robot, and what inspired our work on GRACE. In addition to being capable of autonomous action selection and navigation in the world, the robot could give a dynamically-constructed PowerPoint presentation about itself, and was able to field some basic questions while demonstrating specific capabilities on demand. The system was an attempt to extend parallel-reactive architectures to perform higher-level cognitive tasks. Cerebus was demonstrated at the 2000-01 AAAI Conferences, as well as the 2000 Fall Symposium on Parallel Cognition, and won the Nils Nilsson Award for Integrating AI Technologies.

Our group also worked with Kluge and Hack, our previous robots (may they rest in peace!), and on a Scheme-based programming environment for the Sony Pet Robots (aka the AIBO dogs), to both of which I contributed navigation and infrastructure code.

Other

The Portal is an interactive installation at the Block Gallery and Museum in Evanston, designed and built by a group from the Center for Art and Technology. The Portal is a large wall display of a cellular automata simulation, which is modified by input from a camera monitoring the audience. The growth and death of automata is governed by the audience's interaction with the piece as much as by the rules of the simulation itself. My contribution was in the design of the piece, and in writing the visual display software.

A video recording of the installation is available here (QuickTime format, 15.8 MB).
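As a rough illustration of a cellular automaton whose evolution is shaped by outside input, here is a small sketch. It is not the installation's software: the actual rules used in The Portal are not stated here, so the sketch assumes Conway's Game of Life on a wrap-around grid, and it stands in for the camera with a hypothetical `forced_alive` set of cells that are switched on after each rule update.

```python
def life_step(grid, forced_alive=frozenset()):
    """One Game of Life step on a wrap-around grid of 0/1 cells.

    `forced_alive` is a set of (row, col) cells switched on after the rule
    update, standing in for whatever the camera detected this frame.
    """
    rows, cols = len(grid), len(grid[0])
    new = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count the eight neighbors, wrapping at the edges.
            n = sum(
                grid[(r + dr) % rows][(c + dc) % cols]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            )
            # Assumed rule (standard Life): birth on 3, survival on 2 or 3.
            new[r][c] = 1 if n == 3 or (grid[r][c] and n == 2) else 0
    # External input overrides the rule result for the touched cells.
    for r, c in forced_alive:
        new[r % rows][c % cols] = 1
    return new


# A 5x5 grid holding a horizontal three-cell "blinker" in the middle row.
grid = [[0] * 5 for _ in range(5)]
for col in (1, 2, 3):
    grid[2][col] = 1

undisturbed = life_step(grid)
with_audience = life_step(grid, forced_alive={(0, 0)})
```

Applying the input after the rule update means the audience can seed growth in regions the simulation alone would leave empty, while the rules still decide what happens to those cells on the next step.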